We should start working on Artificial Intelligence again

This title probably feels quite contradictory to what you have encountered yourself. It seems impossible to go on LinkedIn without encountering an AI expert in your first minute of scrolling. Or, if you distance yourself from this pretentious medium, I'd bet that you can't go a day without hearing someone mention what AI or chat told them. We are riding an AI hype cycle at full force. AI aficionados are everywhere, and whether they are telling you that AI will take your job or revolutionize your field, they always seem to refrain from telling you what in the hell an artificial intelligence is. Most people just don't know, and many are confidently wrong about it. So let's get into it, so that you get a better grasp of what it is and why we need to start working on it again.

What is Artificial Intelligence?

Artificial intelligence is the study of trying to mimic human intelligence. This is the definition which the field was founded on (see the Dartmouth Workshop) and which I will follow for the remainder of this piece. Things immediately get a bit fuzzy in this definition though. Human intelligence is itself quite difficult to define, and how can we mimic something if we don't know what we are trying to mimic? Although IQ tests are one of our primary ways to quantify it, they most definitely don't cover all aspects. Defining intelligence is at the core of AI, and there are many different views on how this should be done. For a good overview, I refer you to this article. TL;DR: it is generally agreed that being intelligent requires one to be capable of:

- Achieving goals across diverse environments through learning and adaptation

- Acquiring and applying knowledge, and solving problems creatively

- Self-reflection and understanding social and emotional cues

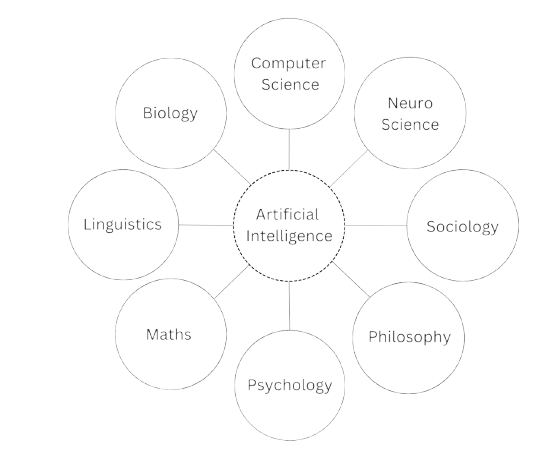

Calling someone or something intelligent is thus quite the compliment. Intelligence is multifaceted, and recreating it is therefore a multidisciplinary problem. This multidisciplinary nature shapes what artificial intelligence is: a scientific field at the crossroads of many more traditional sciences. We can't answer the central question of how one recreates human intelligence if we don't go into these other fields:

- Biology: How can we recreate intelligence if we don't understand how internal processes work in living organisms?

- Neuroscience: The brain is the organ smart people have in abundance, but how does it actually function?

- Computing Science: How do we efficiently represent and process information?

- Mathematics: Patterns, probabilities, and logic are at the heart of reasoning. How do they work?

- Psychology: How do humans perceive, learn, and make decisions?

- Sociology: Intelligent behavior doesn't happen in isolation. How do humans interact, communicate and form societies?

- Philosophy: How should intelligent systems behave, and can they ever be conscious?

- Linguistics: How does meaningful language work, given that it is a large part of what sets humans apart from other species?

To me, nothing is cooler than combining expert knowledge like this with a clear, applied goal. And this goal, recreating human intelligence, would be a revolutionary breakthrough if achieved. True intelligence could accelerate discoveries beyond what humans alone can do, modeling complex systems, predicting outcomes, and generating innovations faster than ever before. And it wouldn't just be a tech milestone: it would force us to rethink what it means to know, think, and even exist.

What's curious, though, is that by following this definition most things branded as 'AI' today don't qualify.

- That 'smart' chatbot you spoke with? Not AI.

- Your movie or music recommendations on Netflix or Spotify? Nope, not AI either.

- A robot doing a dance you saw online? You guessed it: not AI.

These systems are optimized purely for getting a specific job done effectively, not for possessing anything resembling intelligence. They don't reason or think, nor do they understand the problems they're solving. Generally, products like these are built upon techniques found in subfields of AI like machine learning or deep learning. These have become almost synonymous with AI in common discussion, largely because they've delivered products that solve users' problems.

Take chatbots, for example. Systems like ChatGPT, Claude, and Gemini are most often held up as examples of AI, when they are in fact nothing more than a purely statistical approach to modeling language. In practice, they are stochastic parrots, meaning they repeat outputs based on probabilities learned from massive amounts of text and lack any true comprehension of what they are generating. Don't get me wrong, this turns out to have use cases; software development and search rank highest among them. What's more, they are inherently scalable, which is a large part of their success. However, they are in my opinion merely an artifact of a large scientific field, and are not equivalent to what AI is.
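To make "purely statistical" concrete, here is a toy sketch of the idea in Python. It is not how ChatGPT, Claude, or Gemini are built: those use Transformer networks over subword tokens and are trained on vastly more text. The function names, the tiny corpus, and the choice of a bigram model (counting which word follows which) are my own assumptions for illustration. The principle is the same, though: the next word is sampled from probabilities learned from text, and nothing in the program knows what a cat is.

```python
import random
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for every word, which words follow it and how often."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probs(counts, word):
    """Turn the raw follow-up counts for `word` into probabilities."""
    followers = counts[word]
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()}


def generate(counts, start, length, seed=0):
    """Sample a continuation one word at a time, purely from the counts."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length):
        probs = next_word_probs(counts, out[-1])
        if not probs:  # dead end: this word was never followed by anything
            break
        words, weights = zip(*probs.items())
        out.append(rng.choices(words, weights=weights)[0])
    return " ".join(out)


# Hypothetical miniature "training set"
corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
model = train_bigram(corpus)

print(next_word_probs(model, "the"))  # "cat" is the most likely follower
print(generate(model, "the", 8))      # plausible-looking but meaningless text
```

The output can look grammatical, yet the program only ever looks up which word tended to come next. Scaling that idea up by many orders of magnitude is what makes chatbots useful, not a different relationship to meaning.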

So how come? Why do the systems we brand as 'AI' today have so little to do with what the field originally set out to achieve? And more importantly, if real AI could deliver a revolutionary breakthrough, why does nobody seem to be working on it?


Why is no one working on AI?

As you might've already figured, this statement is missing a bit of nuance. People do recognize that recreating intelligence is an interesting and worthwhile quest—many companies have been founded with this exact goal. Unfortunately, most end up abandoning that mission. The process they follow is actually rather straightforward:

1. A company or lab is founded with good intentions: an enthusiastic group of people sets out to figure out how they might create something intelligent.

2. An R&D phase begins. New insights emerge, and there are many promising leads to pursue in the quest for intelligence.

3. Whether expected or not, reality hits: this mission will take far more time and money to reach anything substantial. Some of the existing research, however, could be turned into a functional product to help raise funds.

4. Getting this off the ground requires more capital than is available, so investors enter the picture and provide funding in exchange for equity.

5. The race begins: investors want to see returns within a certain timeframe, and the company must focus on becoming profitable.

6. Scale whatever achieves product-market fit. It might not resemble the original vision at all. Consumer behavior simply guides what sells, so whatever sells gets scaled.

7. The main focus becomes the new product; the initial mission fades. Consumers only know the company for the successful product that emerged, not the original goal.

It is almost impossible to believe now, but OpenAI (the company behind ChatGPT) was founded to research and create AI for good. I think we can safely say they have moved on from this. Whereas they started out publishing intelligence research regularly, their focus has shifted dramatically toward commercial applications. Of course, it's easy to blame OpenAI for selling out, which I do. However, within our capitalist and consumer-focused system, the seven steps I outlined are a logical path to take given the factors at play. Investors want returns, consumers want their short-term problems solved, and engineers want to work on things that deliver visible results and rewards. It is very difficult to keep focus, let alone complete what you set out to do initially.

Another great example, which is close to my heart, is computer chess. Early on in its history, the hope was that if we could build a strong chess-playing machine, we would gain insight into human intelligence. The mission was not really to build a good chess machine for its own sake, but to learn something about the human species. In the end, simply searching more positions on faster chips turned out to be the most effective way to beat human players, not constructing a human-like model of chess. As soon as this became clear, building the best-performing chess model became the goal, because that was what actually garnered attention and recognition. IBM put itself on the map when it built the model that beat the world champion in classical chess, even though that model, which was called Deep Blue, had nothing to do with how humans play chess. As Garry Kasparov, the world champion in question, put it: Deep Blue was intelligent the way your alarm clock is intelligent.

Clearly, we do not always stay true to what is actually best for research or for consumers in the long term. This is exactly why a well-intentioned project like the Transformer, which is the main building block of every chatbot you interact with, has led to a kind of stalemate in natural language processing. I would argue that it worked too well, and that its success has discouraged us from pursuing smarter and more biologically plausible ways of modeling language beyond next-token prediction. In that sense, it may have slowed long-term progress.

I'm not arguing against optimizing for performance. Planes don't flap their wings, after all, and we might still create something far more capable than ourselves without mimicking human intelligence at all. Transformers solve real problems across almost every major field, and the past few years have delivered incredible products that continue to improve. But this can still go hand in hand with returning our focus to actual artificial intelligence. I'm convinced we can still make meaningful leaps in modeling language more effectively, and more importantly, in understanding it at a deeper level. Just building more on top of transformers won't work forever; it's simply too limited a model. For instance, your average transformer has billions of parameters, while a cat needs only about 250 million cortical neurons to become a much more intelligent organism. Even one of the inventors of the Transformer has decided to shift focus toward a new major breakthrough and is therefore likely returning to more fundamental AI work. Fortunately, he's not alone in this. There are ongoing initiatives which are still pursuing genuine AI, and I want to close by highlighting three approaches which I deem to be particularly promising, along with concrete examples of the ongoing work in each area.

Where to look for AI progress?

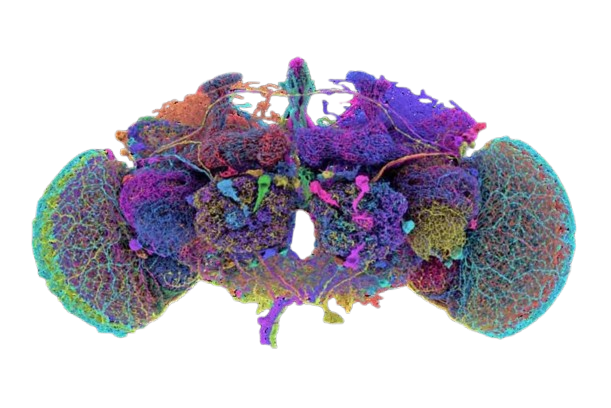

I'd argue that the people furthest along in building a proper and functional framework for intelligence are at Applied Brain Research and the University of Waterloo. They are trying to build a cognitive architecture based on abstract representations of processes in the brain and their interactions. In researching this, they've built Spaun (Semantic Pointer Architecture Unified Network), the closest thing to a working simulation of a brain at a cognitive level. It is capable of recognizing digits, recalling sequences, and performing reasoning tasks. It demonstrates how thinking, memory, and reasoning can emerge naturally from neural activity, rather than being imposed from the outside. In other words, it helps us understand how the brain itself might perform cognitive tasks, which is a big step toward building AI that thinks more like humans.

The neuromorphic approach takes this even further: rather than modeling cognitive functions, it attempts to recreate the brain at its deepest physical level. The idea is that if we model it accurately enough, the true functionality of the brain will emerge naturally from the system. An example of this is the company Numenta, co-founded by Jeff Hawkins. Their work translates neuroscience into computation using a framework called Hierarchical Temporal Memory (HTM). HTM is inspired by how neurons in the neocortex actually work: it models neurons with thousands of synapses on their dendrites and encodes information very efficiently as sparse distributed representations, in which only a small fraction of units is active at any time. This allows the system to continuously learn, predict temporal sequences, and form a kind of memory.
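As a rough illustration of why sparse encodings are attractive, the sketch below plays with sparse distributed representations (SDRs), the encoding HTM is built around. This is not Numenta's code or API. The helper names, the pattern size of 2048 bits, and the 40 active bits are assumptions I picked for the example. It only shows one property: similarity can be measured by counting shared active bits, and that measure survives a fair amount of noise.

```python
import random


def random_sdr(size, active, rng):
    """Return a sparse pattern as the set of indices of its active bits."""
    return frozenset(rng.sample(range(size), active))


def overlap(a, b):
    """Number of active bits two patterns share, used as a similarity score."""
    return len(a & b)


def add_noise(sdr, size, flips, rng):
    """Move `flips` of the active bits to positions that were inactive."""
    kept = set(rng.sample(sorted(sdr), len(sdr) - flips))
    candidates = [i for i in range(size) if i not in sdr]
    return frozenset(kept | set(rng.sample(candidates, flips)))


rng = random.Random(42)
SIZE, ACTIVE = 2048, 40  # roughly 2% of bits active; illustrative values only

a = random_sdr(SIZE, ACTIVE, rng)
b = random_sdr(SIZE, ACTIVE, rng)
noisy_a = add_noise(a, SIZE, 10, rng)  # corrupt a quarter of the active bits

print(overlap(a, a))        # identical patterns share every active bit
print(overlap(a, noisy_a))  # the corrupted copy still shares most of them
print(overlap(a, b))        # two unrelated random patterns share few, if any
```

Because so few bits are active, two unrelated patterns almost never collide, while a damaged copy of a pattern remains easy to recognize. That combination of capacity and robustness is one reason to find this style of encoding appealing.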

The last approach I'll cover comes from one of deep learning's founding fathers, Yann LeCun, a figure worth reading up on in his own right. He's been vocal that LLMs will plateau and have nothing to do with real intelligence. He's also a pioneer of the convolutional neural network (CNN), a revolutionary vision model inspired by the organization of the visual cortex. Currently at Meta's well-funded FAIR lab, he's exploring a predictive approach to intelligence called JEPA (Joint Embedding Predictive Architecture). JEPA is built on the philosophical assumption that intelligence is the ability to predict and model the world internally. Instead of simply repeating patterns or memorizing data like many machine learning systems, JEPA learns to anticipate what will happen next in a scene or situation. If you show it part of a scene, it can predict what objects are likely to appear or how things will move, without explicitly seeing every detail. This approach is promising because it mirrors one of the key features of human intelligence: the ability to simulate and reason about possible futures.

The examples mentioned all have something in common: they are fundamentally research-driven, not product-oriented. They require immense resources and patience, with no guarantee of quick returns. This kind of fundamental research needs our support, not just our attention when it produces the next viral chatbot.

If we want to take the next steps toward truly capable AI, we need a climate that supports patient, curiosity-driven research, long-term funding, and the freedom to explore multiple paradigms simultaneously. Yes, transformers and similar technologies will continue delivering value, and we are only at the beginning of integrating them into our daily lives. However, I'm convinced the future belongs to approaches that seek to understand and replicate the principles of intelligence itself. By keeping these research paths alive and supported, we stand the best chance of creating real, but artificial, intelligence.
